urban liebel on Twitter: "CPU, GPU, TPU and now HIVE, makes total sense ( and no it wasn't me , I swear ;-) https://t.co/PM1VmEm8TT" / Twitter

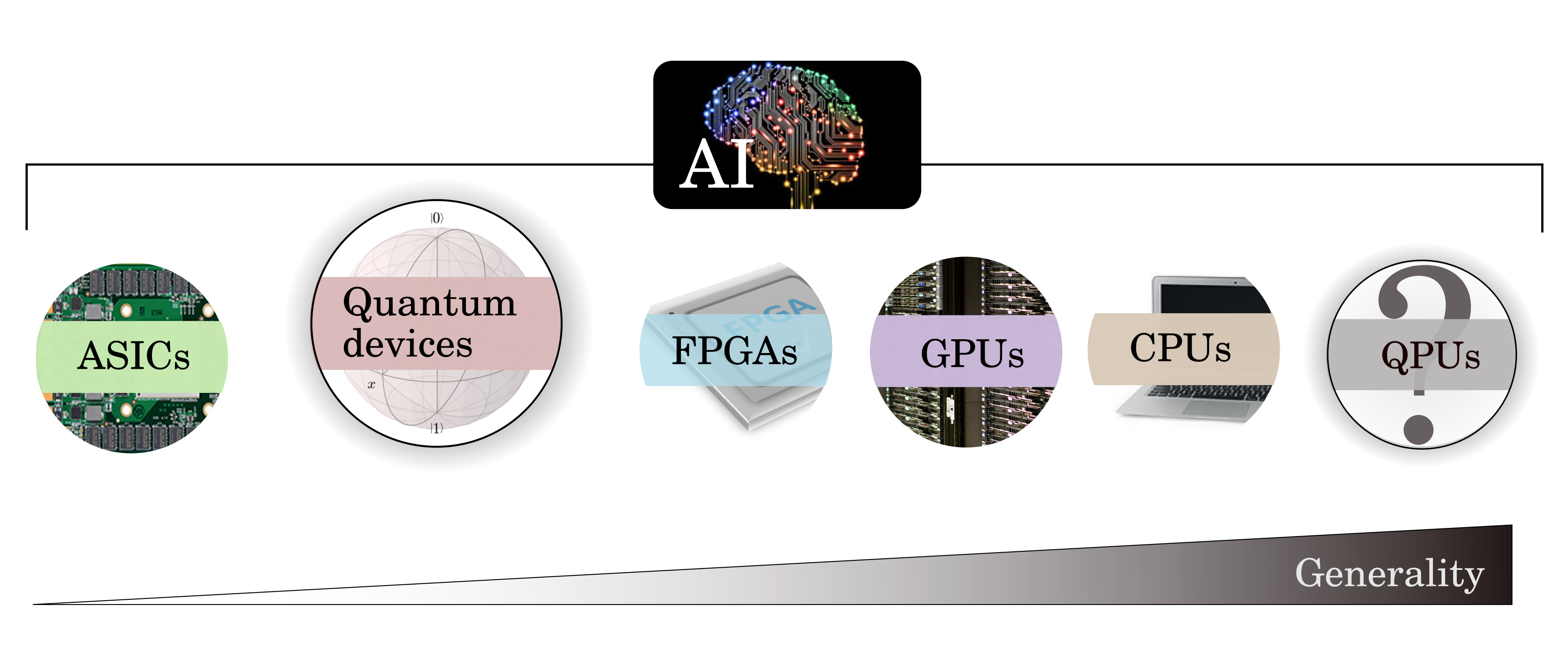

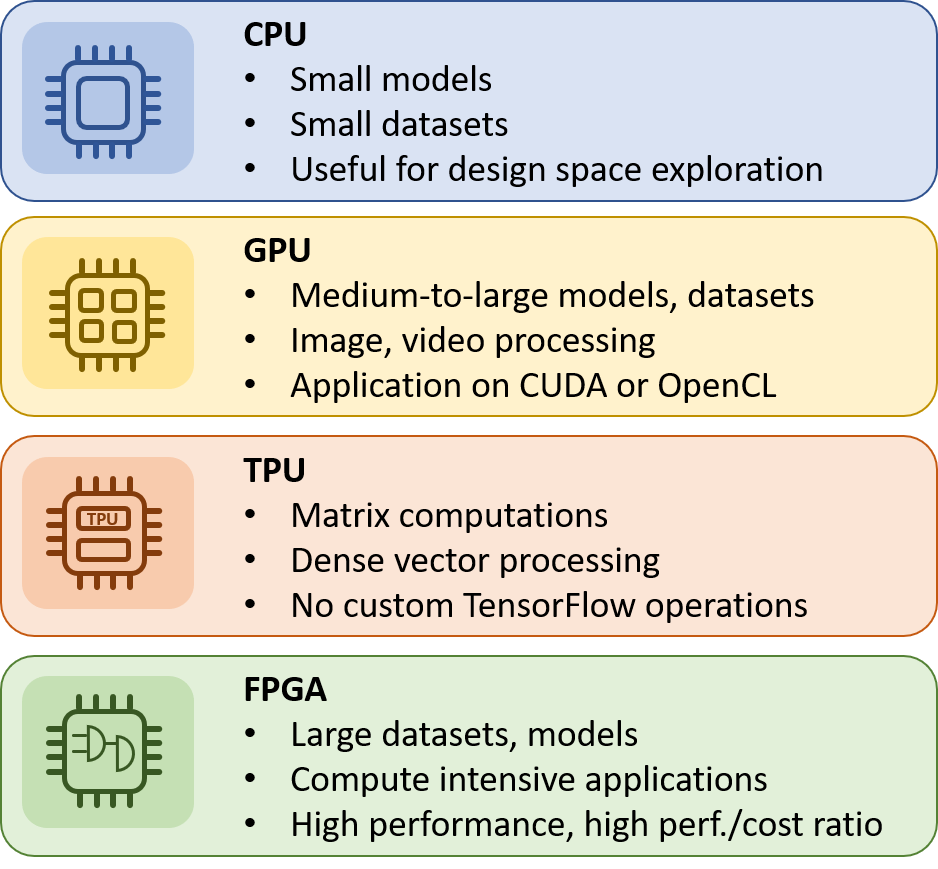

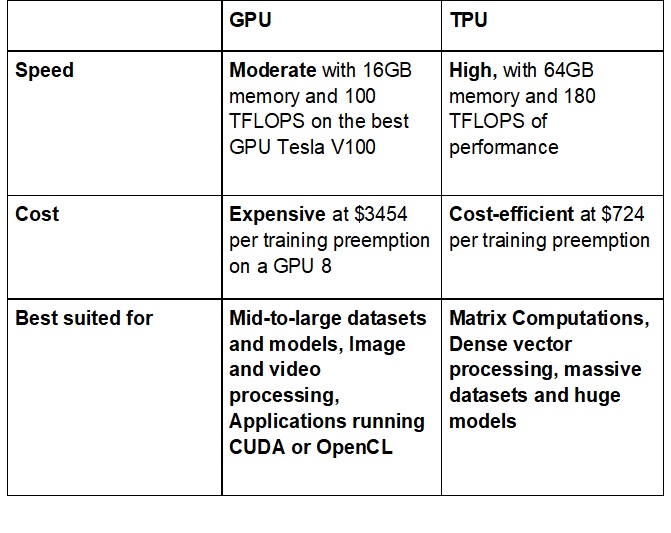

CPU / GPU/ TPU — ML perspective. As a Machine learning Enthusiast who… | by Apeksha Gaonkar | Analytics Vidhya | Medium

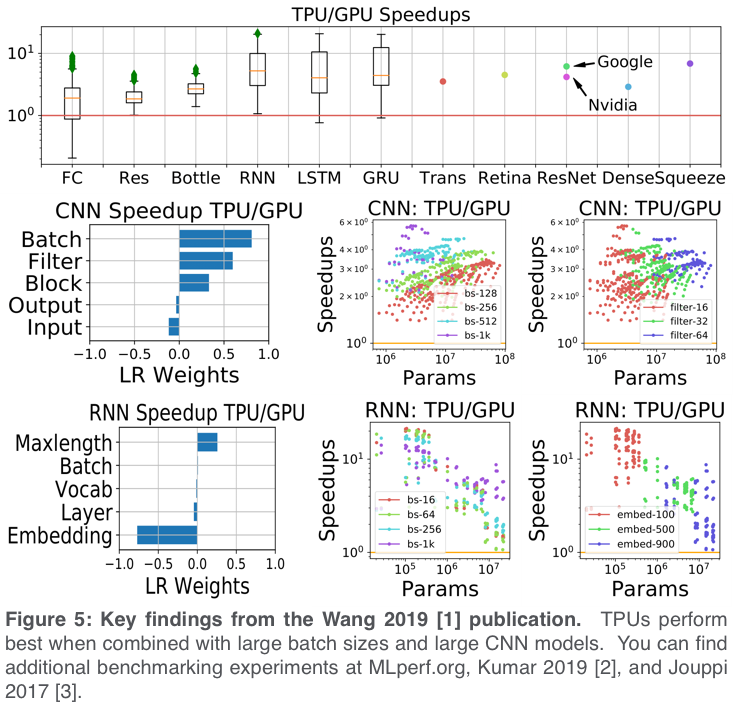

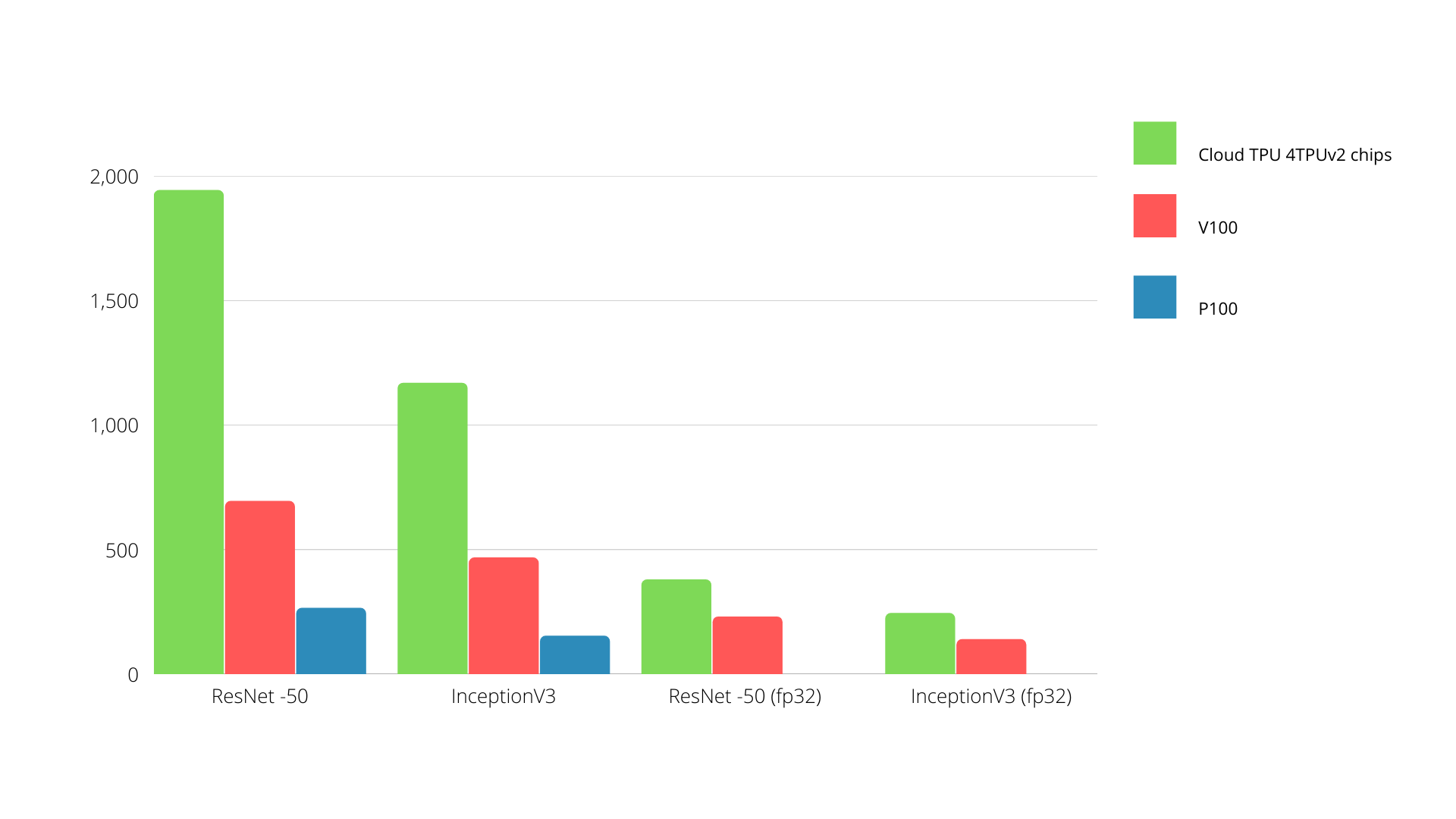

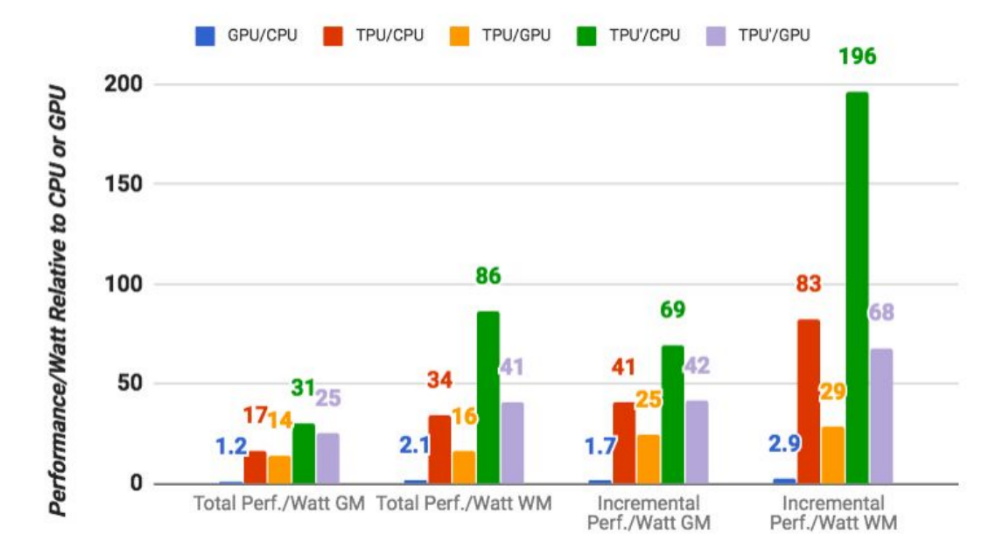

Google says its custom machine learning chips are often 15-30x faster than GPUs and CPUs | TechCrunch